MEET Digital Culture Center and Scuola di Robotica combine their expertise and visions to create a training initiative: the Immersive Education Program. This program enhances

We asked Professors Antonio Sgorbissa and Carmine Recchiuto of DIBRIS at the University of Genoa about the applications – and usefulness – of ChatGPT in robotics.

Q. Do you think it is an advantage to integrate ChatGPT in a social robot?

Antonio Sgorbissa: Integrating ChatGPT into a social robot can certainly offer significant advantages. Large Language Models, such as those based on OpenAI’s GPT, allow the robot to interact in a more natural and engaging way with human users, thanks to their remarkable ability to understand and generate natural language.

However, it is important to emphasise that we cannot leave control over what the robot says entirely to a generative language model. Those who develop autonomous social robots will continue to be responsible for writing ‘intelligent’ programmes that determine ‘what’ to say; generative models can help with ‘how’ to say it naturally.
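A minimal Python sketch of this split, built on our own assumptions: a hand-written function (`choose_message`, hypothetical) decides what the robot should communicate, and a separate step (`realise`, also hypothetical) only varies how it is phrased. Here the ‘how’ step is a fixed table of paraphrases; in a real robot it might be a prompt to a generative model, which this sketch does not call.

```python
import random

def choose_message(state: dict) -> str:
    """Deterministic content selection written by the robot developer (the 'what').
    The state keys used here are hypothetical examples."""
    if state.get("medication_due"):
        return "remind_medication"
    if state.get("user_sad"):
        return "offer_support"
    return "small_talk"

# Stand-in for a generative model: it may only rephrase a message that has
# already been chosen. A real system might send a prompt to an LLM instead.
PHRASINGS = {
    "remind_medication": [
        "It's time for your medication.",
        "Just a friendly reminder: your medication is due now.",
    ],
    "offer_support": [
        "You seem a bit down. Would you like to talk about it?",
        "I'm here if you want to chat about how you feel.",
    ],
    "small_talk": [
        "How has your day been so far?",
        "Anything interesting happen today?",
    ],
}

def realise(message: str, rng: random.Random) -> str:
    """Pick one surface form for a fixed message (the 'how')."""
    return rng.choice(PHRASINGS[message])

rng = random.Random(0)
msg = choose_message({"medication_due": True})
print(msg)  # remind_medication
print(realise(msg, rng))
```

Because `realise` can only rephrase a message that `choose_message` has already selected, the content stays under the developer's control even when the wording varies.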

Q. Could Generative AI optimise human-robot interaction?

Carmine Recchiuto: Without a doubt. At present, one of the most significant limitations of social robots is their limited ability to understand users and respond appropriately, moving the dialogue forward in a way that is interesting and engaging for the user.

In this sense, Large Language Models, if used with awareness, can become an extremely powerful tool in the hands of robot developers. However, simply connecting a robot to ChatGPT is not enough to use these tools intelligently, just as ‘abusing’ PowerPoint animations is not enough to make a good presentation. I am sure readers will understand what I mean.

ChatGPT is a tool, and like all tools it needs someone who knows how to use it.

Q. What improvement does ChatGPT bring compared to the speech recognition systems with which some social robots are equipped?

Carmine Recchiuto: Speech recognition systems serve different purposes from Natural Language Processing systems or systems designed for text generation, such as GPT-based Large Language Models. From a robot’s point of view, these are distinct and complementary phases: the first converts the words spoken by the person into text, while the second and third are aimed at understanding text and producing it. Finally, a fourth phase should be mentioned, which deals with synthesising the reply as audio in the appropriate manner. This is no small problem, considering how much information is carried not only by our words but also by the tone, volume, and rhythm of the voice.
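The four phases can be pictured as a chain of functions. The following Python sketch is purely illustrative: every stage is a stub with names of our own choosing (`recognise_speech`, `understand`, `generate`, `synthesise`), the ‘audio’ is just UTF-8 text, and the fourth phase returns prosody settings (rate, volume, pitch) in place of actual sound, to reflect the point about tone, volume, and rhythm.

```python
import re
from dataclasses import dataclass

@dataclass
class Utterance:
    text: str
    rate: float = 1.0    # speaking-rate multiplier (rhythm)
    volume: float = 1.0  # relative loudness
    pitch: float = 1.0   # relative pitch (tone)

def recognise_speech(audio: bytes) -> str:
    """Phase 1 (stub): speech recognition, audio -> text.
    Here we simply pretend the audio is already UTF-8 text."""
    return audio.decode("utf-8")

def understand(text: str) -> str:
    """Phase 2 (stub): language understanding, text -> intent label."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    if words & {"hello", "hi"}:
        return "greeting"
    if words & {"tired", "sad"}:
        return "low_mood"
    return "unknown"

def generate(intent: str) -> str:
    """Phase 3 (stub): text generation, intent -> reply text."""
    replies = {
        "greeting": "Hello! Nice to see you.",
        "low_mood": "I'm sorry to hear that. Shall we take a short break?",
        "unknown": "Could you tell me a bit more?",
    }
    return replies[intent]

def synthesise(reply: str, intent: str) -> Utterance:
    """Phase 4 (stub): prepare speech output, choosing prosody as well as words."""
    if intent == "low_mood":
        # Slower, softer, slightly lower voice for a sensitive moment.
        return Utterance(reply, rate=0.85, volume=0.8, pitch=0.95)
    return Utterance(reply)

def dialogue_turn(audio: bytes) -> Utterance:
    """Run one turn through all four phases in order."""
    text = recognise_speech(audio)
    intent = understand(text)
    reply = generate(intent)
    return synthesise(reply, intent)

print(dialogue_turn(b"I feel so tired today"))
```

In such a design, a model like GPT would replace only the middle stages; speech recognition and synthesis remain separate components.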

What can be compared with GPT are the older rule-based systems for understanding and producing text, which are often part of the software accompanying commercial robotics platforms such as NAO and Pepper. A rule-based system is much more limited in comprehending and producing text because it is essentially based on a long list of cases: ‘if I understand X, I answer Y’; ‘if I understand A, I answer B’. Systems like GPT, on the other hand, are trained on a very large number of sentences and choose how to answer based on probabilistic reasoning. Put simply, they choose the words most frequently associated with other words in the vast collection of sentences they were trained on. A bit conformist, perhaps.
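To make the contrast concrete, here is a deliberately toy Python sketch. The first part is a rule table of the ‘if I understand X, I answer Y’ kind; the second is a bigram counter that greedily picks the word most often seen after the previous one in a tiny made-up corpus. This is a strong simplification of our own: GPT-style models are neural networks that estimate probability distributions over tokens using long contexts, not bigram tables, but the sketch captures the ‘most frequently associated words’ intuition.

```python
from collections import Counter, defaultdict
from typing import Optional

# Rule-based approach: an explicit list of cases.
RULES = {
    "what is your name": "My name is Robo.",
    "how are you": "I'm fine, thank you.",
}

def rule_based_reply(text: str) -> Optional[str]:
    """Return the scripted answer, or None if no rule covers the input."""
    key = text.lower().strip(" ?!.")
    return RULES.get(key)

# Frequency-based approach (toy): count which word follows which.
CORPUS = [
    "the robot is helpful today",
    "the robot is helpful",
    "the robot is friendly",
    "the user is happy",
]

def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows

def continue_text(follows: dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Greedily append the word most frequently seen after the current one."""
    words = [start]
    for _ in range(max_words):
        options = follows.get(words[-1])
        if not options:
            break  # no known continuation
        words.append(options.most_common(1)[0][0])
    return " ".join(words)

follows = train_bigrams(CORPUS)
print(rule_based_reply("How are you?"))
print(rule_based_reply("Tell me a joke"))
print(continue_text(follows, "the"))
```

The rule table answers only the cases it lists and returns `None` otherwise, while the bigram generator always produces something: with this corpus, `continue_text(follows, "the")` yields "the robot is helpful today", because "helpful" is the most frequent word after "is". Always choosing the most common continuation is precisely what makes such output ‘a bit conformist’.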

The result, in terms of the naturalness of the dialogue, is definitely in GPT’s favour. But who is in control? Who knows what the system is going to say if we do not rely on rules clearly defined a priori? Do we really want to let a robot respond using the same words that people would use in a similar context? Systems like ChatGPT include safeguards against discriminatory and vulgar language, so in certain cases the answer is definitely yes: let ChatGPT decide what is most appropriate to say. In other cases, however, we want the robot to say exactly what we think is right. In those cases, ‘classical’ rule-based systems will still have a role to play.
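One common way to combine the two, sketched here in Python under our own assumptions, is to let explicit rules take priority for topics where the wording must be exactly right and to fall back to a generative model otherwise. The keyword sets, the fixed replies, and `fake_llm` are all hypothetical; `fake_llm` merely stands in for a real call to a model such as ChatGPT.

```python
import re
from typing import Callable, Optional

# Topics where the developer wants the robot to say exactly a vetted sentence.
FIXED_RESPONSES = {
    "emergency": "I am calling for help now. Please stay where you are.",
    "medication": "Please check with your doctor before changing your dose.",
}

# Hypothetical trigger words for each fixed topic.
KEYWORDS = {
    "emergency": {"fell", "fallen", "emergency", "ambulance"},
    "medication": {"dose", "pill", "pills", "medicine"},
}

def match_rule(text: str) -> Optional[str]:
    """Return the first topic whose keywords appear in the text, else None."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    for topic, keys in KEYWORDS.items():
        if words & keys:
            return topic
    return None

def respond(text: str, generate: Callable[[str], str]) -> tuple[str, str]:
    """Return (source, reply): a fixed reply if a rule fires,
    otherwise delegate to the generative model."""
    topic = match_rule(text)
    if topic is not None:
        return "rule", FIXED_RESPONSES[topic]
    return "generative", generate(text)

def fake_llm(text: str) -> str:
    """Hypothetical stand-in for a call to a generative language model."""
    return f"(generated reply to: {text!r})"

print(respond("I fell in the kitchen", fake_llm))
print(respond("Tell me about Genoa", fake_llm))
```

Keyword matching is crude (it ignores negation, for instance), so a real system would need a more careful way of recognising these situations; the point here is only the ordering: rules first, generation second.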

Q. Are there reports of a robot designed by ChatGPT? Could ChatGPT also improve a robot’s functions, not just its human-machine interface (HMI)?

Antonio Sgorbissa: That is a really interesting question. Some say that generative language models are not ‘real intelligence’, because they merely make probabilistic choices about which words to put together to form complex sentences. But the result is still surprising. There is talk of a robot designed by ChatGPT, but try asking ChatGPT how to tell a person that he or she has a serious illness, or the best way to break up with a girlfriend or boyfriend, and you will see what reasoning skills and sensitivity it is capable of displaying. Recently, I took part in an event organised by the White Bracelets, a volunteer association that offers empathic and spiritual support to people who are ill or at the end of life. On that occasion, I presented a theatrical performance in which two actors used ChatGPT as a mediator between opposing positions on vaccines. ChatGPT showed an ability to mediate with a wisdom that few humans could display!

Although I am not an expert in linguistics or neuroscience, a question arises for me. Is it possible to conjecture that even so-called human intelligence, or at least the part of it that has to do with language, is nothing more than a set of probabilistic rules, learned from experience over our lifetime, that tell us which words to put together? To put it jokingly, could one argue that even human intelligence is not ‘real intelligence’?

Obviously, there is no definition of ‘true intelligence’ that does not refer to human-like intelligence, but the question here is more subtle: whether the ‘stuff’ that artificial and human intelligence are made of is really different or, after all, something very similar. If it is similar, we will not accept it without suffering, of that I am sure.

MEET Digital Culture Center and Scuola di Robotica combine their expertise and visions to create a training initiative: the Immersive Education Program. This program enhances

For the achievement of the Horizon Europe project results, PRAESIIDIUM, numerous research groups are combining their expertise to develop innovative strategies for the prevention of

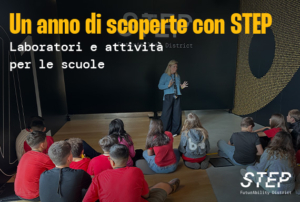

STEP FuturAbility District is a dynamic, interactive experiential journey that allows students to immerse themselves in today’s ongoing digital revolution. For schools, we offer the

The autumn program in the regional Hubs of the Dicolab. Digital Culture project is packed with opportunities for those who want to learn about the

Completa il tuo profilo prima di continuare