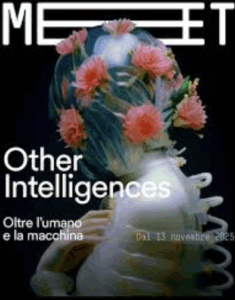

MEET Milan: workshops for children and families on animal intelligences, held alongside the “Other Intelligences” exhibition.

MEET Milan and Scuola di Robotica present thematic workshops for children and teens, taking place alongside the “Other Intelligences” exhibition.

WHERE: Milan, MEET Digital Culture Center